The 1st Workshop & Challenge on Micro-gesture Analysis for Hidden Emotion Understanding (MiGA)

To be held at IJCAI 2023, 19th-25th August 2023, Macao, China

Welcome to MiGA Workshop & Challenge 2023

We jointly hold the first workshop and challenge on Micro-gesture Analysis for Hidden Emotion Understanding (MiGA) at IJCAI 2023, 19th-25th August 2023. We warmly welcome your contributions and participation.

News

7 March : Great news! The MiGA workshop has been accepted at IJCAI for 2023!

20 March : The website of the MiGA workshop & challenge is available.

27 March : The CodaLab website of the MiGA challenge is available.

17 May : The final results of the MiGA challenge are available. Congratulations to the winners!

17 May : The submission deadline of MiGA workshop is extended!

Overview

We hold the 1st MiGA Workshop & Challenge to explore using body gestures for hidden emotional state analysis, to be held at IJCAI 2023.

As an important form of non-verbal communication, human body gestures are capable of conveying emotional information during social interaction. Previous work has focused mainly on facial expressions, speech, or expressive body gestures to interpret classical expressive emotions. In contrast, we focus on a specific group of body gestures, called micro-gestures (MGs), used in psychology research to interpret inner human feelings.

MGs are subtle and spontaneous body movements that are proven, together with micro-expressions, to be more reliable than normal facial expressions for conveying hidden emotional information.

The aim of our MiGA workshop & challenge is to build a united, supportive research community for micro-gesture analysis and related emotion understanding problems. It will facilitate discussions between different research labs in academia and industry, identify the main attributes that vary across gesture-based emotion understanding approaches, and discuss the progress that has been made in this field so far, while identifying the next immediate open problems the community should address. We provide two different datasets and related benchmarks, and wish to inspire a new way of utilizing body gestures for human emotion understanding and bring a new direction to the emotion AI community.

Workshop Topics

The workshop supplementing the challenge covers a wider scope, i.e., any paper that is related to gesture and micro-gesture analysis for emotion understanding but is not directly about any of the challenge tracks can be submitted as a workshop paper. The topic includes but is not limited to:

- Gesture and micro-gesture analysis for emotion understanding.

- Vision-based methodologies for gesture-based emotion understanding, e.g., classification, detection, online recognition, generation, and transferring.

- Solutions for special challenges involved in in-the-wild gesture analysis, e.g., severely imbalanced sample distributions, highly heterogeneous samples across classes, noisy irrelevant motions, noisy backgrounds, etc.

- Different modalities developed for emotion understanding, e.g., body gestures, attentive gazes, and desensitized voices.

- New data collected for the purpose of hidden emotion understanding.

- Psychological studies and neuroscience research about various body behaviors and their links to emotions.

- Hardware/apparatuses/imaging systems developed for the purpose of hidden emotion understanding.

- Applications of gestures and micro-gestures, e.g., for medical assessment in hospitals (ADHD, depression), for health surveillance at home or in other environments, and for emotion assessment in scenarios such as education and job interviews.

References

- Chen H., Shi H., Liu X., Li X., and Zhao G. SMG: A Micro-gesture Dataset Towards Spontaneous Body Gestures for Emotional Stress State Analysis. International Journal of Computer Vision (IJCV 2023), 2023: 1-21.

- Liu X., Shi H., Chen H., Yu Z., Li X., and Zhao G. iMiGUE: An Identity-free Video Dataset for Micro-gesture Understanding and Emotion Analysis. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2021), 2021: 10631-10642.

- Chen H., Liu X., Li X., Shi H., and Zhao G. Analyze Spontaneous Gestures for Emotional Stress State Recognition: A Micro-gesture Dataset and Analysis with Deep Learning. 14th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2019), 2019: 1-8.

Workshop Details

Body gestures are an important channel for revealing people’s emotions, alongside facial expressions and speech. On occasions when people intend to control or hide their true feelings, e.g., for social etiquette or other reasons, body gestures are harder to control and are thus more revealing clues of actual feelings than the face and the voice. Micro-gestures (MGs) are defined as a special category of body gestures that are indicative of humans’ emotional status. Representative instances include scratching the head, touching the nose, and rubbing the hands, which are not intended to be shown when communicating with others but occur spontaneously due to, e.g., felt stress or discomfort, as shown in Figure 1. MGs differ from indicative gestures, which are performed on purpose to facilitate communication, e.g., using gestures to assist verbal expression during a discussion. Although research on general body gestures is prevalent, it is largely about humans’ macro body movements and lacks finer-level consideration such as the micro-gestures discussed above. In addition, these studies are mainly concerned with recognizing movements performed expressively, and the link between gestures and hidden emotions is yet to be explored.

Fig. 1. Micro-gesture examples. A tennis player is talking while spontaneously performing body gestures in a post-match interview.

Motivated by the above observations, we propose to jointly hold the first challenge and workshop on micro-gesture analysis for hidden emotion understanding (MiGA) to fill the gap in the current research field. The MiGA workshop and challenge aim to promote research on developing AI methods for MG analysis toward the goal of hidden emotion understanding. As this is the first MiGA event, we propose to hold it in workshop + challenge mode with a focus on the competition part, which builds and provides benchmark datasets and a fair validation platform for researchers working on MG classification and online recognition for identity-insensitive emotion understanding. The workshop covers a wider scope than the challenge, i.e., any research that provides theoretical and practical support for gesture and micro-gesture analysis and emotion understanding.

Papers must comply with the CEURART paper style (1 column) and can fall in one of the following categories:

Full research papers (minimum 7 pages)

Short research papers (4-6 pages)

Position papers (2 pages)

The CEURART template can be found on this Overleaf link.

Accepted papers (after blind review by at least 3 experts) will be included in a volume of the CEUR Workshop Proceedings. We are also planning to organize a special issue, and the authors of the most interesting and relevant papers will be invited to submit an extended manuscript.

Workshop submissions will be handled by the CMT submission system; the submission link is as follows: Paper Submission. All questions about submissions should be emailed to chen.haoyu at oulu.fi

- June 02, 2023. Paper submission deadline.

- June 06, 2023. Notification to authors.

- June 10, 2023. Camera-ready deadline.

- August, 2023. MiGA IJCAI 2023 Workshop, Macao, China.

Note: Each paper must be presented on-site at the conference by an author or co-author.

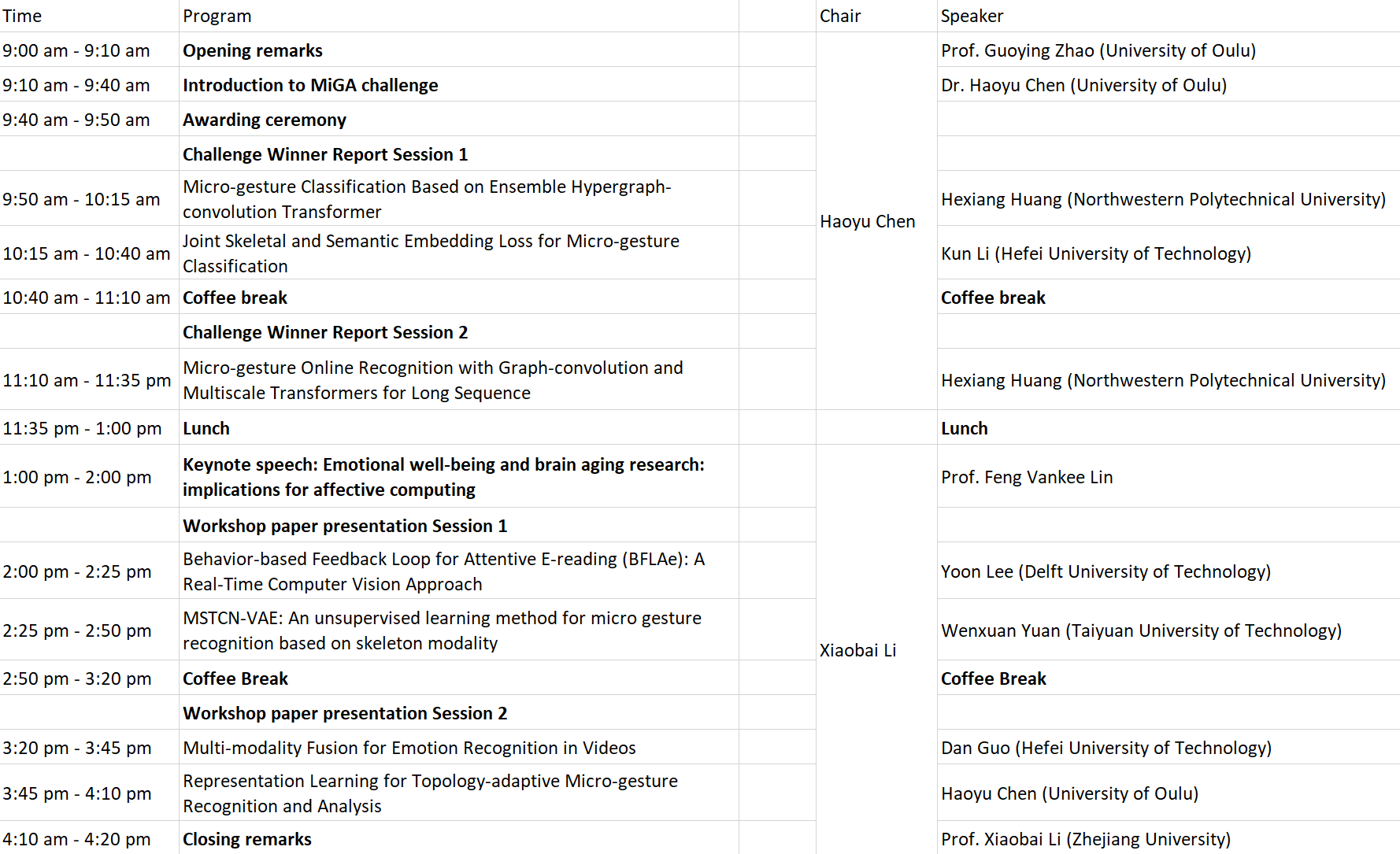

The proposed workshop (including the challenge) will be held as a full-day event on August 21st, 2023.

Challenge Details

The MiGA challenge is planned as a continuous, annual event, and each year’s MiGA will include different (multiple) challenge tasks, with large-scale datasets of expanding size. As it is the first year of MiGA, we will focus on two fundamental tasks of micro-gestures: classification and online recognition. In the future, the tracks of MiGA will be extended to leverage those identity-insensitive cues to achieve hidden emotion understanding. The challenge will be based on two spontaneous datasets: one is the SMG dataset published at FG 2019, “Analyze Spontaneous Gestures for Emotional Stress State Recognition: A Micro-gesture Dataset and Analysis with Deep Learning”, and the other is the iMiGUE dataset published at CVPR 2021, “iMiGUE: An Identity-free Video Dataset for Micro-Gesture Understanding and Emotion Analysis”. Participating teams can choose to compete on one or both tasks (tracks). The challenge will be organized on the CodaLab website.

Track 1: skeleton-based micro-gesture classification from short video clips. The MG datasets were collected in in-the-wild settings. Compared to ordinary action/gesture data, MGs concern more fine-grained and subtle body movements that occur spontaneously in practical interactions. Thus, learning those fine-grained body movement patterns, handling the imbalanced sample distribution of MGs, and distinguishing highly heterogeneous MG samples across classes are the big challenges to be addressed.

Track 2: skeleton-based online micro-gesture recognition from long video sequences. Unlike existing online action/gesture recognition datasets, in which samples are well aligned/performed in the sequence, MG samples occur spontaneously in any combination or order, just as in daily communicative scenarios. Thus, the task of online micro-gesture recognition requires dealing with more complex body-movement transition patterns (e.g., co-occurrence of multiple MGs, incomplete MGs, and complicated transitions between MGs) and detecting fine-grained MGs among irrelevant/context body movements, which poses new challenges that haven’t been considered in previous gesture research.

The rules and guidelines for the competition/challenge

1. Datasets

Datasets for the proposed challenge are available. The MiGA challenge is planned as a continuous annual event. Two benchmark datasets, published at FG 2019 and CVPR 2021, are available and will be used for the challenge. The first one is the Spontaneous Micro-Gesture (SMG) dataset published at FG 2019, which consists of 3,692 samples of 17 MGs. The MG clips are annotated from 40 long video sequences (10-15 minutes each) with 821,056 frames in total. The dataset was collected from 40 subjects while they narrated a fake story and a real story to elicit the emotional states. The participants were recorded with a Kinect, resulting in four modalities: RGB, 3D skeletal joints, depth, and silhouette. In this workshop, we only allow participants to use the skeleton modality. Download SMG dataset

The second dataset is iMiGUE, published at CVPR 2021. The Micro-Gesture Understanding and Emotion analysis (iMiGUE) dataset consists of 32 MGs plus one non-MG class, collected from videos of post-match press conferences of famous tennis players. It contains 18,499 MG samples for detecting negative and positive emotions. The MG clips are annotated from 359 long video sequences (0.5-26 minutes each) with 3,765,600 frames in total. The dataset contains the RGB modality and 2D skeletal joints extracted with OpenPose. In this workshop, we only allow participants to use the skeleton modality. Download iMiGUE dataset

Note that: 1) not all data are used for the challenge; 2) part of the data will be selected and tailored for different challenge tasks; 3) as MiGA is planned as a continuous event, it will contain multiple parallel challenge tracks, and the tasks will vary each year.

2. Evaluation

The training data come from the iMiGUE and SMG datasets, while the testing data (released without annotations) come from iMiGUE. We deploy a cross-subject evaluation protocol by dividing the 72 subjects into a training group of 37 subjects and a testing group of 35 subjects. For the MG classification track, 13,936 and 3,692 MG clips from the iMiGUE and SMG datasets, respectively, will be used for training and validation, and the remaining 4,563 MG clips from iMiGUE will be used for testing. For the MG online recognition track, 252 and 40 long sequences from the iMiGUE and SMG datasets, respectively, will be used for training and validation, and the remaining 104 long sequences from iMiGUE will be used for testing.

MG classification track: We report Top-5 accuracy on the following subsets of the test set: 1) Overall: all segments in the test split; 2) Tail classes: due to the long-tailed nature of the datasets, among a total of 33 classes in the iMiGUE dataset, 28 classes are tail classes (approx. 57.8% of the data). Since there is considerable overlap between consecutive fine-grained MG segments, we use Top-5 accuracy instead of the standard Top-1. Submissions will be ranked on the basis of Top-5 accuracy on the overall split.
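The Top-5 metric described above can be sketched as follows. This is a minimal illustration, not the organizers' evaluation script: the function name `top_k_accuracy`, the per-class score layout, and the `keep_classes` argument (used here to restrict the computation to a subset such as the tail classes) are assumptions made for the example, and the page does not list which 28 classes count as tail classes.

```python
def top_k_accuracy(scores, labels, k=5, keep_classes=None):
    """Fraction of samples whose true label is among the k highest-scoring classes.

    scores: one list of per-class scores per sample (list index = class id).
    labels: true class id for each sample.
    keep_classes: optional set of class ids; when given, only samples whose
        true label is in this set are counted (e.g. a tail-class subset).
    """
    hits = 0
    total = 0
    for row, label in zip(scores, labels):
        if keep_classes is not None and label not in keep_classes:
            continue
        # Class ids sorted by descending score; keep the first k.
        top_k = sorted(range(len(row)), key=lambda c: row[c], reverse=True)[:k]
        hits += label in top_k
        total += 1
    return hits / total if total else 0.0
```

Calling `top_k_accuracy(scores, labels)` gives the overall figure, while passing a set of class ids as `keep_classes` gives the figure for that subset only.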

MG online recognition track: We jointly evaluate the detection and classification performance of algorithms using the F1 score, defined as F1 = 2 × Precision × Recall / (Precision + Recall). Given a long video sequence to be evaluated, Precision is the fraction of correctly classified MGs among all gestures retrieved in the sequence by the algorithm, while Recall (or sensitivity) is the fraction of MGs that have been correctly retrieved over the total number of annotated MGs.
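As a rough illustration of the F1 definition above, the sketch below scores one long sequence. The page does not state how a retrieved gesture is judged "correct", so the matching rule used here (same gesture ID, temporal IoU of at least 0.5, greedy one-to-one matching) is purely an assumption for the example, as are the function names `temporal_iou` and `sequence_f1`.

```python
def temporal_iou(a, b):
    """Intersection over union of two inclusive frame intervals (start, end)."""
    inter = min(a[1], b[1]) - max(a[0], b[0]) + 1
    if inter <= 0:
        return 0.0
    union = (a[1] - a[0] + 1) + (b[1] - b[0] + 1) - inter
    return inter / union


def sequence_f1(predictions, annotations, iou_threshold=0.5):
    """F1 score for one sequence.

    predictions, annotations: lists of (gesture_id, start_frame, end_frame).
    Assumed matching rule (not taken from the challenge page): a prediction is
    correct if it shares its gesture id with a not-yet-matched annotation and
    their temporal IoU is at least iou_threshold.
    """
    matched = set()
    true_positives = 0
    for gesture_id, start, end in predictions:
        for i, (ann_id, ann_start, ann_end) in enumerate(annotations):
            if i in matched or ann_id != gesture_id:
                continue
            if temporal_iou((start, end), (ann_start, ann_end)) >= iou_threshold:
                matched.add(i)
                true_positives += 1
                break
    precision = true_positives / len(predictions) if predictions else 0.0
    recall = true_positives / len(annotations) if annotations else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)
```

With one correct prediction out of two retrieved gestures and three annotated MGs, Precision is 1/2, Recall is 1/3, and F1 is 0.4.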

Submission format for both tracks. Participants must submit their predictions in the following format. For each sequence SequenceXXXX.zip in the data folder, participants should create a SequenceXXXX_prediction.csv file with a line for each predicted gesture [GestureID, StartFrame, EndFrame] (the same format as SequenceXXXX_labels.csv); for the classification track, StartFrame and EndFrame are left empty. The predictions for all samples should be put in a single ZIP file and submitted to CodaLab. The results will be evaluated on the server and displayed on the ranking list in real time. The organization team has the right to examine the participants’ source code to ensure the reproducibility of the algorithms. The final results and ranking will be confirmed and announced by the organizers.
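A submission archive in the format described above could be assembled along these lines. This is a sketch under stated assumptions: the helper name `write_submission`, the archive name `submission.zip`, the underscore in the `<sequence>_prediction.csv` file name, and the absence of a CSV header row are all guesses for illustration and should be checked against the sample label files shipped with the data.

```python
import csv
import zipfile
from pathlib import Path


def write_submission(predictions, out_dir, zip_name="submission.zip"):
    """Write one prediction CSV per sequence and bundle them into a ZIP file.

    predictions: dict mapping a sequence name (e.g. "Sequence0001") to a list
        of (gesture_id, start_frame, end_frame) rows. For the classification
        track, pass None for both frames so the fields are left empty.
    Returns the path of the ZIP archive.
    """
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    zip_path = out_dir / zip_name
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for name, rows in predictions.items():
            # File name pattern is an assumption (see the note above).
            csv_path = out_dir / f"{name}_prediction.csv"
            with open(csv_path, "w", newline="") as handle:
                writer = csv.writer(handle)
                for gesture_id, start, end in rows:
                    writer.writerow([
                        gesture_id,
                        "" if start is None else start,
                        "" if end is None else end,
                    ])
            # Store the CSV at the top level of the archive.
            archive.write(csv_path, arcname=csv_path.name)
    return zip_path
```

For example, `write_submission({"Sequence0001": [(5, 10, 42)]}, "out")` writes `out/Sequence0001_prediction.csv` containing the line `5,10,42` and packs it into `out/submission.zip`.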

Participation guidelines

Please visit the CodaLab websites to join the competitions:

The 1st MiGA-IJCAI Challenge Track 1: Micro-gesture Classification

The 1st MiGA-IJCAI Challenge Track 2: Micro-gesture Online Recognition

Final results are available

We have examined all source code submitted and finalized the rankings of the MiGA challenge. Congratulations to the following competitors on their final rankings:

Track 1: Micro-gesture Classification

1. gkdx2, team name 'HFUT-VUT'

2. HHuang, team name 'NPU-Stanford'

3. ChenxiCui

Track 2: Micro-gesture Online Recognition

1. HHuang, team name 'NPU-Stanford'

2. gkdx2, team name 'HFUT-VUT'

Important Dates (may be adjusted slightly later)

The timeline for the Challenge will be organized as follows:

- Mar 27, 2023. Call for Challenge online. Registration starts.

- Mar 31, 2023. Release of training data.

- May 2, 2023. Release of testing data.

- May 12, 2023. Final testing data and result submission. Registration ends.

- May 15, 2023. Release of challenge results.

- June 02, 2023. Paper submission deadline (workshop).

- June 06, 2023. Notification to authors.

- June 10, 2023. Camera-ready deadline.

- August, 2023. MiGA IJCAI 2023 Workshop, Macao, China.

Organizing Committee

Guoying Zhao, University of Oulu, Finland.

Björn W. Schuller, University of Augsburg, Germany; Imperial College London, UK.

Ehsan Adeli, Stanford University, USA.

Tingshao Zhu, Institute of Psychology, Chinese Academy of Sciences.

Invited speakers

Feng Vankee Lin, Stanford University, USA.

Data chairs

Haoyu Chen (leading data chair), University of Oulu, Finland.

Henglin Shi, University of Oulu, Finland.

Xin Liu, Lappeenranta-Lahti University of Technology LUT, Finland.

Xiaobai Li, University of Oulu, Finland & School of Cyber Science and Technology, Zhejiang University, China.

You are welcome to join our Telegram group and discuss with peers.

The contact info is listed as follows:

- For questions regarding workshop submissions and the competition, please get in touch with chen.haoyu at oulu.fi.

- For questions about the workshop's local arrangements and information, please get in touch with local@ijcai-23.org.

- For questions regarding general issues of the workshop program, please get in touch with guoying.zhao at oulu.fi.